Material segmentation using U-Net and VGGNet

Introduction

This project explores deep learning for automating the segmentation of tomographic X-ray images, reducing the need for labor-intensive manual processing. We evaluated a U-Net and VGGNet-based models on segmenting microstructures in scientific imaging. Our findings show that while a U-Net trained from scratch excels on the dataset it was trained on, models built on a pre-trained VGGNet generalize better to a new dataset. This makes pre-trained models valuable for image analysis in fields such as materials science and medical imaging, where labeled data is limited.

Research Question

Can pre-trained deep learning models improve the automation of X-ray image segmentation, particularly in domains with limited labeled data?

Methods

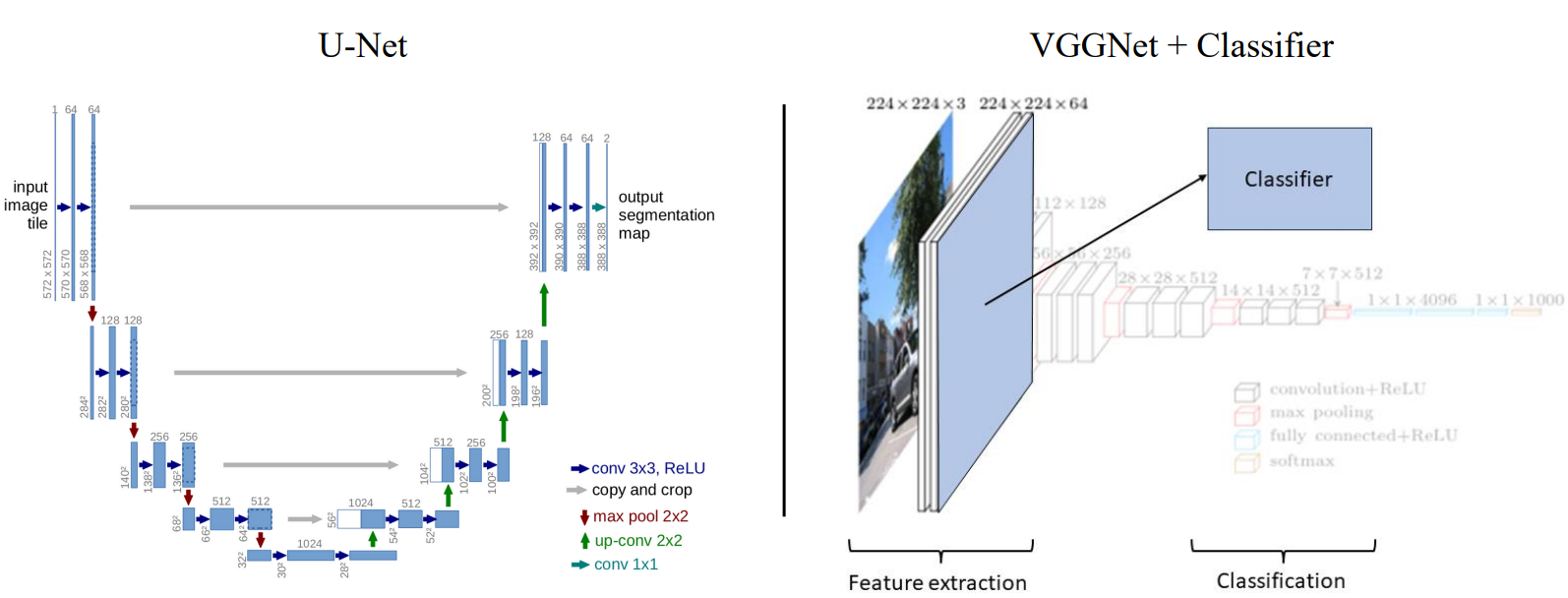

This study applied two deep learning architectures—U-Net and a VGGNet-based model—to segment microstructures in X-ray images. The project was conducted in two phases:

- Training a U-Net model from scratch on a dataset of 500 images of solid oxide cell (SOC) microstructures, using a supervised learning approach. The model was optimized with cross-entropy loss and the Adam optimizer.

- Evaluating generalization ability by testing the trained U-Net on a second dataset of battery electrode images and comparing its performance against a pre-trained VGGNet + Classifier model. The VGGNet, originally trained on ImageNet, extracted image features, which were then classified using both a neural network and an XGBoost classifier.
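
As an illustration of the training objective named above, the following is a minimal NumPy sketch of a pixel-wise cross-entropy loss. It is not the project's code: the function name `pixelwise_cross_entropy`, the array shapes, and the three-class toy example are assumptions made for illustration. In practice a deep learning framework would supply this loss together with the Adam optimizer.

```python
import numpy as np


def pixelwise_cross_entropy(logits, labels):
    """Mean cross-entropy over all pixels of one image.

    logits: float array of shape (H, W, C), raw class scores per pixel.
    labels: int array of shape (H, W), true class index per pixel.
    (Shapes and names are illustrative assumptions.)
    """
    # Numerically stable log-softmax along the class axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    # Select the log-probability of the true class at every pixel.
    true_log_probs = np.take_along_axis(log_probs, labels[..., None], axis=-1)
    return float(-true_log_probs.mean())


# Hypothetical 2x2 image with 3 classes (the class count is an assumption).
labels = np.array([[0, 1], [2, 0]])

# Uninformative scores: every class is equally likely at every pixel.
uniform_logits = np.zeros((2, 2, 3))
loss_uniform = pixelwise_cross_entropy(uniform_logits, labels)

# Confident, correct scores: a large logit on the true class of each pixel.
confident_logits = 10.0 * np.eye(3)[labels]
loss_confident = pixelwise_cross_entropy(confident_logits, labels)
```

With uniform scores the loss equals the natural logarithm of the number of classes, and it approaches zero as the scores for the true classes grow. Training pushes the network's outputs from the first situation toward the second.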

Key Findings

- U-Net achieved high accuracy (F1-score: 98.72%) on the first dataset, confirming its effectiveness in segmenting well-defined microstructures with sufficient training data. However, its performance dropped significantly (F1-score: 82.73%) when applied to the second dataset, indicating limited generalization ability.

- VGGNet + Classifier outperformed U-Net in cross-dataset generalization, achieving F1-scores of 89.19% (XGBoost) and 91.12% (Neural Network) on the second dataset. This suggests that pre-trained feature extractors, even when trained on an unrelated domain such as the natural images in ImageNet, can be highly effective in microstructure segmentation.

- The results suggest that, for generalization, the amount and diversity of training data matter more than the complexity of the model architecture. The VGGNet’s success indicates that pre-trained networks can transfer knowledge to new domains, reducing the need for extensive labeled data.

- Future work could focus on training a U-Net on a much larger segmentation-specific dataset, such as COCO, to improve its generalization ability.
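
For reference, the F1-scores quoted in the findings above can be computed from predicted and ground-truth label maps. The sketch below shows one common per-class, pixel-wise formulation, F1 = 2TP / (2TP + FP + FN). The source does not state whether scores were computed per class, averaged, or for a binary task, so the function name `f1_per_class` and the two-class toy arrays are assumptions for illustration only.

```python
import numpy as np


def f1_per_class(pred, target, num_classes):
    """Pixel-wise F1-score for each class.

    pred, target: int arrays of identical shape holding class indices.
    Returns a list of length num_classes; a class absent from both
    arrays yields NaN because its F1 is undefined.
    """
    scores = []
    for c in range(num_classes):
        tp = np.sum((pred == c) & (target == c))  # correctly labeled as c
        fp = np.sum((pred == c) & (target != c))  # wrongly labeled as c
        fn = np.sum((pred != c) & (target == c))  # missed pixels of c
        denom = 2 * tp + fp + fn
        scores.append(float(2 * tp / denom) if denom > 0 else float("nan"))
    return scores


# Hypothetical 2x2 prediction and ground truth with two classes.
pred = np.array([[0, 0], [1, 1]])
target = np.array([[0, 1], [1, 1]])

scores = f1_per_class(pred, target, num_classes=2)
macro_f1 = float(np.mean(scores))  # unweighted mean over classes
```

In this toy case one pixel of class 1 is mislabeled as class 0, giving F1-scores of 2/3 for class 0 and 0.8 for class 1.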

Conclusion

This project demonstrates that deep learning can automate X-ray image segmentation with high accuracy, and that pre-trained models offer a viable solution for data-scarce domains like materials science and medical imaging.